Module 7: Reinforcement Learning

Topic 3: Implementing RL

How do I actually implement an RL algorithm?

All this theory is interesting (or not, depending on how much you enjoy theory), but what you really want to know is how to actually implement RL, especially for the project (assuming you choose the RL path for the project rather than the GA path). Or maybe you have seen that RL has had some amazing real-world success stories and you wonder how they were achieved. I will discuss AlphaGo briefly in the next topic, but this topic focuses on some practicalities of implementation.

Choosing a state representation

One of the keys to implementing RL successfully, especially in something like your project, is to pick a good state representation. When we discussed MDPs, we noted that the theory behind the RL algorithms assumes a Markov representation of the state. However, a truly Markov state is not practical in most real-world applications! Do we just give up? No! We approximate it as best we can, and we help the algorithm along by giving it a good state representation. Note that there are advanced techniques in which the algorithm learns the state representation as it interacts with the world, but those are well beyond the scope of this class.

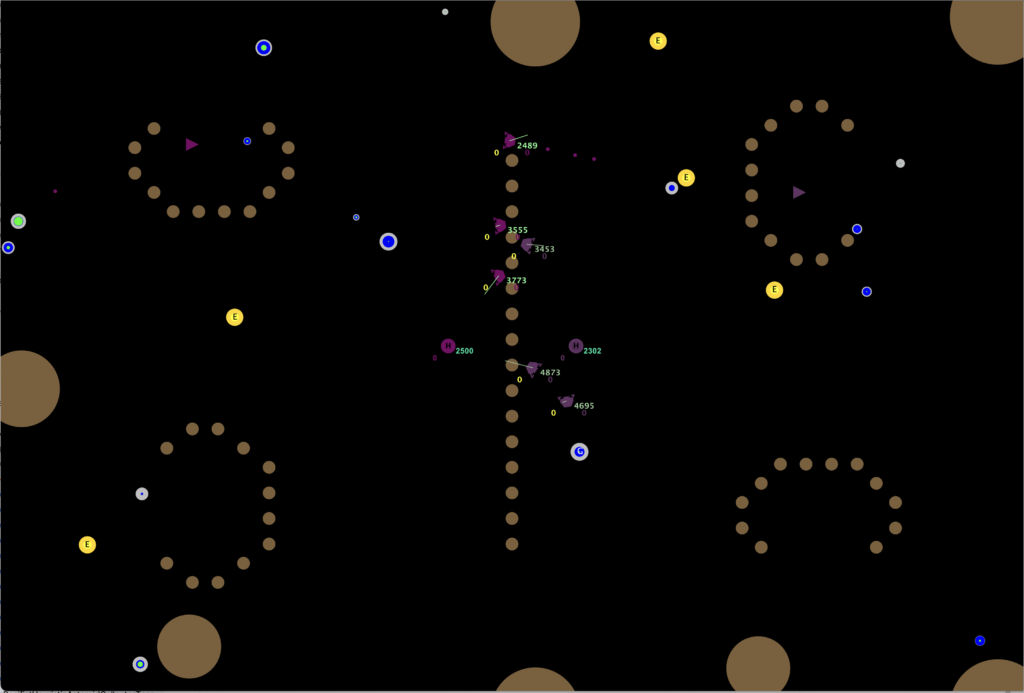

Let’s start simple, with a nice discrete grid or maze-type world such as the one shown below.

This image is from a paper on Quantum RL (I just liked the image as it was a simple clean maze).

What should the states be in this world? The most obvious answer is the individual grid squares, and that is the right answer! The world is small enough that you can represent every square in memory, and unless someone tells you that the dynamics let you occupy different positions within a square, knowing which square you are in fully describes where you are.
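As a concrete illustration, here is a minimal Python sketch of the grid-square state representation, assuming a small hypothetical maze layout (the `MAZE` string, `ACTIONS`, `build_states`, and `step` names are all made up for this example). Each open square gets an integer state index, walls are unreachable, and the table `Q` has one row per state and one column per action, which is exactly the structure a tabular RL algorithm fills in.

```python
# Hypothetical maze layout: '#' is a wall, '.' is open, 'S' is the start, 'G' is the goal.
MAZE = [
    "S..#",
    ".#..",
    "...G",
]

# The four gridworld actions as (row delta, column delta).
ACTIONS = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}

def build_states(maze):
    """Assign an integer state index to every open (non-wall) square."""
    states = {}
    for r, row in enumerate(maze):
        for c, cell in enumerate(row):
            if cell != "#":
                states[(r, c)] = len(states)
    return states

def step(states, pos, action):
    """Move one square; walls and the maze edge are unreachable, so the agent stays put."""
    dr, dc = ACTIONS[action]
    nxt = (pos[0] + dr, pos[1] + dc)
    return nxt if nxt in states else pos

STATES = build_states(MAZE)
# Tabular value estimates: one row per state, one column per action.
Q = [[0.0] * len(ACTIONS) for _ in STATES]

print(len(STATES))                          # → 10
print(step(STATES, (0, 2), "right"))        # → (0, 2), blocked by the wall
```

Treating walls as "stay in place" is one common convention; some gridworlds instead add a penalty for bumping into a wall.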

Now imagine that this world was real-valued: the robot still has a start state and a goal state, but the locations are continuous. Since it is so familiar to us, let's use our spacesettlers project environment as an example!

If our task is still to get from one location to another, we could break the space into a series of discrete squares, just as in the maze above. This can work well, especially if the task is literally to move from one location to another fixed goal location. As with the maze, obstacles can be handled the same way (as unreachable states). However, this approach is unlikely to work well if the goal moves! For example, if you want to train your agent to reach the flag alcove in the upper right, this approach might work well. If you want your agent to learn to get to the flag efficiently no matter where the flag happens to be, you will need a different representation, because the same grid square can be a good or a bad place to be depending on where the flag currently is.
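The fixed-goal approach can be sketched as a mapping from a continuous position to a grid-cell index. This is a minimal sketch assuming hypothetical world and cell dimensions (`WORLD_WIDTH`, `WORLD_HEIGHT`, `CELL_SIZE` are placeholders, not actual spacesettlers values); the resulting index can then be used exactly like a maze square.

```python
import math

# Hypothetical dimensions in pixels; substitute the values from your own environment.
WORLD_WIDTH = 1600
WORLD_HEIGHT = 1080
CELL_SIZE = 100

N_COLS = math.ceil(WORLD_WIDTH / CELL_SIZE)   # 16 columns
N_ROWS = math.ceil(WORLD_HEIGHT / CELL_SIZE)  # 11 rows

def position_to_state(x, y):
    """Map a continuous (x, y) position to a single discrete grid-cell index."""
    # Clamp so positions on or past the world edge still land in a valid cell.
    col = max(0, min(int(x // CELL_SIZE), N_COLS - 1))
    row = max(0, min(int(y // CELL_SIZE), N_ROWS - 1))
    return row * N_COLS + col

print(position_to_state(150.0, 250.0))  # → 33 (row 2, column 1)
```

Note that the state says nothing about where the goal is, which is why this only works when the goal stays fixed.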

Continuous state spaces

How do we handle a continuous state space where the task isn't just to get to a fixed location? The RL algorithms we have discussed in class do not handle a continuous state space well: they learn a value for each individual state, and in a continuous space the agent will almost never visit exactly the same state twice. We need to think creatively about what information will help the agent achieve its goal. For example, sticking with the task of racing to the flag efficiently while hitting as few obstacles as possible, we probably want a representation that is more ego-centric. This means the representation is focused on the agent itself and the information around it that is needed to solve the problem. What relevant information do we need?

- Direction and distance to flag

- Is the straight line path between me and the flag blocked?

- Is there a free path for at least x pixels in each of 8 directions? (There are other ways to capture this information; this is just one idea.)

One could then discretize this information: for example, distance to the flag becomes short, medium, or long, and direction becomes one of the 8 directions. You may need a more refined approach, but this example is meant to get you thinking!
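Here is one way the ego-centric features above could be turned into a discrete state tuple. It is a sketch under stated assumptions: the distance thresholds are hypothetical, and the two obstacle features (`path_blocked` and `free_in_direction`) are taken as inputs because how you compute them depends on your environment.

```python
import math

# Hypothetical distance thresholds in pixels.
SHORT_RANGE = 200.0
MEDIUM_RANGE = 600.0

def distance_bin(distance):
    """Discretize distance to the flag: 0 = short, 1 = medium, 2 = long."""
    if distance < SHORT_RANGE:
        return 0
    if distance < MEDIUM_RANGE:
        return 1
    return 2

def direction_bin(dx, dy):
    """Discretize direction (dx, dy) into one of 8 sectors of 45 degrees each.

    Sector 0 is centred on the +x axis. With screen coordinates (y pointing
    down) the sectors run clockwise; either is fine if you stay consistent.
    """
    angle = math.atan2(dy, dx) % (2 * math.pi)
    return int((angle + math.pi / 8) // (math.pi / 4)) % 8

def ego_state(ship_xy, flag_xy, path_blocked, free_in_direction):
    """Build a discrete, ego-centric state tuple.

    path_blocked: is the straight-line path to the flag obstructed? (assumed input)
    free_in_direction: 8 bools, is there a free path of at least x pixels in
    each direction? (assumed input)
    """
    dx = flag_xy[0] - ship_xy[0]
    dy = flag_xy[1] - ship_xy[1]
    return (
        distance_bin(math.hypot(dx, dy)),
        direction_bin(dx, dy),
        bool(path_blocked),
        tuple(bool(f) for f in free_in_direction),
    )

# Number of distinct states: 3 distances * 8 directions * 2 * 2**8 free-path patterns.
NUM_STATES = 3 * 8 * 2 * 2 ** 8  # 12288

print(ego_state((0, 0), (0, 700), True, [False] * 8))
# → (2, 2, True, (False, False, False, False, False, False, False, False))
```

The tuple is hashable, so it can be used directly as a key into a dictionary-based Q-table. Counting the states is a useful sanity check: the 8 free-path flags alone multiply the state space by 256.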

What makes the ideal or best state space?

The ideal state space is one that gives a Markov representation of the state. But, as mentioned above, this is rarely possible in the real world! My advice is to incorporate as much information as your agent needs, but also make sure the states are discrete and will be revisited often enough to learn from. The convergence theory behind the algorithms requires visiting every state-action pair infinitely often. In practice, that is not necessary, but if you set up your state space so that you only ever see a state a handful of times, you are very unlikely to learn a useful policy for that state. The answer is a balancing act of choosing the right level of abstraction: do not choose a representation so bogged down in details that states are rarely revisited, and do not choose one so high-level that it lacks the details needed to solve the problem.
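One practical way to check whether your states are revisited often enough is to log the discrete state at every step during training and count the visits. The sketch below assumes you have such a log; the function name `visit_report` and the `min_visits` threshold of 20 are hypothetical choices, not a rule from the theory.

```python
from collections import Counter

def visit_report(visited_states, num_states, min_visits=20):
    """Summarize how well a logged run covers a discrete state space.

    visited_states: every (hashable) state observed during training.
    num_states: total size of the discrete state space.
    min_visits: hypothetical threshold below which a state counts as "rare".
    """
    counts = Counter(visited_states)
    rare = {s for s, n in counts.items() if n < min_visits}
    return {
        # Fraction of the whole state space that was ever reached.
        "coverage": len(counts) / num_states,
        # Fraction of the reached states that were seen too rarely to learn from.
        "rare_fraction": len(rare) / len(counts) if counts else 0.0,
        "rare_states": rare,
    }

report = visit_report([0, 0, 0, 1, 1, 2], num_states=10, min_visits=2)
print(report["rare_states"])  # → {2}
```

If coverage is low and most reached states are rare, the representation is probably too fine-grained; if the agent cannot distinguish situations that need different actions, it is probably too coarse.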

Choosing actions

Choosing your actions can be trivial (as in the gridworld case, where they are typically up/down/left/right) or much more complicated. The real world is rarely so nice as to hand you a set of discrete actions at exactly the right level of abstraction!

As with the state space, the level of abstraction is critical. None of the RL algorithms we discuss in this class can handle continuous actions, so if your problem has continuous actions you will need to discretize them in some way. Also as with the state space, avoid an action discretization that yields actions you rarely get to try. If you only ever try an action once in a given state, you will not learn whether it is the best action from that state.
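To make sure each discrete action actually gets tried repeatedly, the agent needs some exploration. The sketch below assumes epsilon-greedy selection, one common choice (your algorithm may explore differently); `N_ACTIONS`, `EPSILON`, and `choose_action` are hypothetical names, and the `tries` table records how often each action was taken in each state so you can check that no action is starved.

```python
import random
from collections import defaultdict

N_ACTIONS = 8    # hypothetical size of the discrete action set
EPSILON = 0.1    # hypothetical exploration rate

# Q[state][action] value estimates and tries[state][action] counts, created on demand.
Q = defaultdict(lambda: [0.0] * N_ACTIONS)
tries = defaultdict(lambda: [0] * N_ACTIONS)

def choose_action(state, epsilon=EPSILON, rng=random):
    """Epsilon-greedy: with probability epsilon pick a uniformly random action,
    otherwise pick the action with the highest current Q-value (ties go to the
    lowest index)."""
    if rng.random() < epsilon:
        action = rng.randrange(N_ACTIONS)
    else:
        values = Q[state]
        action = max(range(N_ACTIONS), key=values.__getitem__)
    tries[state][action] += 1
    return action

rng = random.Random(0)
for _ in range(1000):
    choose_action("some_state", rng=rng)
print(tries["some_state"])  # every action tried; action 0 dominates while all Q-values are 0
```

Inspecting `tries` per state is a quick way to spot actions that are almost never explored.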

For our example spacesettlers task above, we likely want actions that move the ship to one of a fixed set of nearby locations. This gives us a clean and simple action set, though it may not produce as tight a final path as we would like.
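A fixed set of nearby locations can be generated from the ship's current position. This is a minimal sketch assuming 8 evenly spaced directions and a hypothetical step distance of 150 pixels; `action_to_target` is a made-up name, and the low-level controller that actually flies the ship to the returned point (whatever move-to-location action your environment provides) is not shown.

```python
import math

# Hypothetical parameters: 8 directions, each target 150 pixels from the ship.
NUM_DIRECTIONS = 8
STEP_DISTANCE = 150.0

def action_to_target(ship_xy, action):
    """Map a discrete action index 0..7 to a target point STEP_DISTANCE away,
    with targets spaced 45 degrees apart starting along the +x axis."""
    if not 0 <= action < NUM_DIRECTIONS:
        raise ValueError(f"action must be in [0, {NUM_DIRECTIONS}), got {action}")
    angle = 2 * math.pi * action / NUM_DIRECTIONS
    return (
        ship_xy[0] + STEP_DISTANCE * math.cos(angle),
        ship_xy[1] + STEP_DISTANCE * math.sin(angle),
    )

print(action_to_target((100.0, 100.0), 0))  # → (250.0, 100.0)
```

Using the same 8 directions for the actions and for the direction-to-flag feature keeps the state and action spaces aligned, though nothing requires that.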

Exercise

Complete the exercise on RL state and action spaces